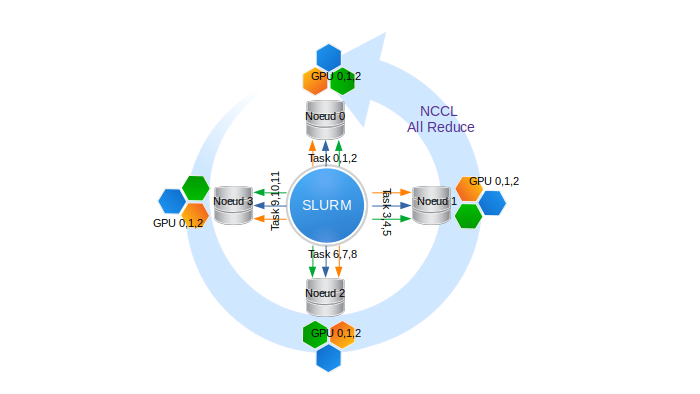

Multi-Node Multi-GPU Comprehensive Working Example for PyTorch Lightning on AzureML | by Joel Stremmel | Medium

the imagenet main when is use multi gpu(not set gpu args) then the input will not call input.cuda() why? · Issue #481 · pytorch/examples · GitHub

Accessible Multi-Billion Parameter Model Training with PyTorch Lightning + DeepSpeed | by PyTorch Lightning team | PyTorch Lightning Developer Blog